You are not gathering evidence to discover the truth. You are gathering evidence to confirm what you already believe, and discarding the rest. This is not a personal failing. It is the default operation of the human mind, running in every domain where you have prior beliefs, emotions, or preferences. Confirmation bias is arguably the most pervasive cognitive bias affecting human judgment, and understanding it precisely, not just acknowledging its existence, is what allows you to actually counteract it.

What Is Confirmation Bias?

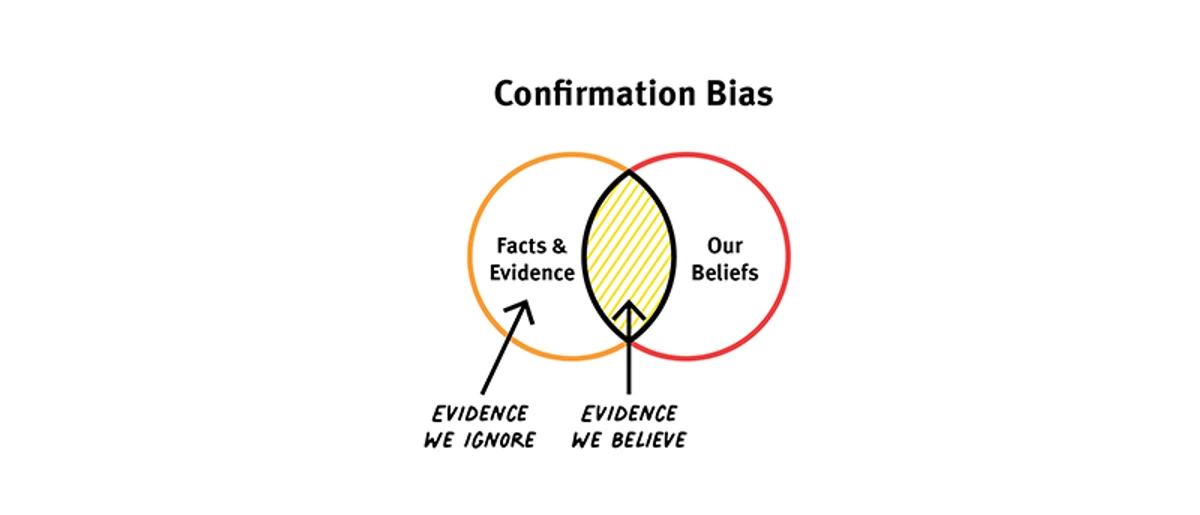

Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms or supports one's prior beliefs, hypotheses, or values. It operates at every stage of information processing: in what you seek out, in how you interpret what you find, and in what you remember afterward.

The term is generally credited to English psychologist Peter Wason, whose research in the 1960s with the "2-4-6 task" revealed with striking clarity how people test hypotheses. Participants were shown the sequence 2-4-6 and told it followed a rule. They were asked to discover the rule by proposing other sequences. The experimenter would tell them whether each sequence followed the rule.

Wason's 2-4-6 Task

Most participants immediately hypothesized that the rule was "consecutive even numbers" or "numbers increasing by two." They then tested this hypothesis by proposing sequences like 4-6-8, 8-10-12, and 20-22-24 — all of which the experimenter confirmed.

Satisfied, they announced their rule. Most were wrong. The actual rule was simply "any three numbers in ascending order." Sequences like 1-2-3 or 5-17-244 would also have been confirmed, but few participants proposed them, because those sequences violated their hypothesis and so risked disconfirming it. The mind was not searching for disconfirmation. It was searching for confirmation of what it already believed.

The Wason task demonstrates confirmation bias in its purest form: faced with a hypothesis and an infinite space of possible tests, people overwhelmingly choose tests that would confirm their hypothesis if true — rather than tests that would reveal whether their hypothesis is false. This pattern is not limited to laboratory experiments. It is the default mode of human inquiry in every domain where prior beliefs exist.
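The logic of the task fits in a few lines of Python. This is a minimal sketch that encodes only the two rules described above; the function and variable names are illustrative. A test can tell the two rules apart only when they disagree about a sequence, and every sequence the participants typically proposed is one on which they agree.

```python
# Minimal sketch of Wason's 2-4-6 task (names are illustrative).

def true_rule(seq):
    """Experimenter's actual rule: any three numbers in ascending order."""
    a, b, c = seq
    return a < b < c

def hypothesis(seq):
    """Typical participant guess: numbers increasing by two."""
    a, b, c = seq
    return b - a == 2 and c - b == 2

def is_diagnostic(seq):
    """A test can separate the two rules only if they give different answers."""
    return true_rule(seq) != hypothesis(seq)

# Sequences that fit the hypothesis (what participants proposed).
positive_tests = [(4, 6, 8), (8, 10, 12), (20, 22, 24)]
# Sequences that violate the hypothesis (what almost nobody tried).
violating_tests = [(1, 2, 3), (5, 17, 244)]

for seq in positive_tests + violating_tests:
    print(seq,
          "| experimenter says:", true_rule(seq),
          "| hypothesis predicts:", hypothesis(seq),
          "| diagnostic:", is_diagnostic(seq))
```

Every sequence in the first list gets "yes" under both rules, so no number of such tests could ever separate them. Only the sequences that violate the hypothesis carry information about whether it is wrong.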

The Three Mechanisms of Confirmation Bias

Confirmation bias operates through three distinct but related mechanisms, each of which can distort judgment independently and which compound when they operate together.

1. Biased Information Search

When seeking information, people disproportionately look for sources, studies, and evidence that support their existing beliefs. A person who believes a particular investment is sound will seek research confirming its value; they will not search with equal effort for research suggesting it's overvalued.

This mechanism operates before any evidence is even encountered — it shapes what enters the information environment in the first place.

2. Biased Interpretation

When exposed to mixed evidence — some confirming, some disconfirming — people apply different standards of scrutiny to each type. Confirming evidence is accepted at face value; disconfirming evidence is subjected to skeptical scrutiny, looking for methodological flaws, alternative interpretations, or reasons to discount it.

Evidence of the same quality therefore leads to different conclusions depending on whether it confirms or challenges prior beliefs.
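The effect of asymmetric scrutiny can be sketched with Bayes' rule in odds form, where each piece of evidence multiplies the odds of a belief by a likelihood ratio. The model below is a toy with invented numbers: the evidence is perfectly balanced, and the only difference between the two reasoners is that one gives disconfirming items half their proper weight on a log scale.

```python
# Toy model of biased interpretation (all numbers are hypothetical).
# Evidence arrives in balanced pairs: one confirming item with
# likelihood ratio 2, one disconfirming item with likelihood ratio 1/2.

def update_odds(prior_odds, likelihood_ratios):
    """Bayes' rule in odds form: multiply the odds by each likelihood ratio."""
    odds = prior_odds
    for lr in likelihood_ratios:
        odds *= lr
    return odds

def odds_to_prob(odds):
    return odds / (1 + odds)

def evidence_stream(pairs, disconfirming_discount):
    """Build a balanced stream of likelihood ratios.

    A discount d in (0, 1] weakens the disconfirming ratio 1/2 to (1/2) ** d,
    i.e. it scales that item's log-weight by d. d = 1.0 means no bias.
    """
    stream = []
    for _ in range(pairs):
        stream.append(2.0)                            # confirming item
        stream.append(0.5 ** disconfirming_discount)  # disconfirming item
    return stream

prior = 1.0  # even odds, i.e. probability 0.5
fair = update_odds(prior, evidence_stream(10, 1.0))
biased = update_odds(prior, evidence_stream(10, 0.5))
print(f"even-handed reasoner: {odds_to_prob(fair):.3f}")
print(f"biased reasoner:      {odds_to_prob(biased):.3f}")
```

Twenty items of balanced evidence leave the even-handed reasoner exactly where they started, while discounting the disconfirming half pushes the biased reasoner to roughly 0.97. The likelihood ratios and discount factor are arbitrary; the point is the direction of the drift, not its size.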

3. Biased Memory

When recalling past experiences and information, people more easily and more accurately remember evidence that supported their beliefs than evidence that challenged them. Memory is reconstructive, not reproductive — and the reconstruction is shaped by current beliefs.

This creates a feedback loop: biased memory reinforces current beliefs, which then bias future search and interpretation.

The three mechanisms reinforce each other in a closed loop. Biased search means confirming evidence is more likely to enter the information environment. Biased interpretation means confirming evidence is more likely to be accepted. Biased memory means confirming evidence is more likely to be retained and recalled. The result is a belief system that feels well-supported by evidence — because it systematically selected for the evidence that supports it and discarded or discounted everything else.
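The compounding can be made concrete with a little arithmetic. In this hypothetical sketch, confirming and disconfirming evidence start out equally common, and each of the three mechanisms lets only 70% of disconfirming items through while keeping every confirming item. The 70% pass rate is an arbitrary assumption chosen for illustration.

```python
# Toy arithmetic for the closed loop (pass rates are hypothetical).

def confirming_share(stage_pass_rates, base_confirming=0.5):
    """Share of retained evidence that confirms the belief.

    stage_pass_rates: fraction of disconfirming items that survive each
    stage. Confirming items are assumed to pass every stage untouched.
    """
    surviving_disconfirming = 1.0 - base_confirming
    for rate in stage_pass_rates:
        surviving_disconfirming *= rate
    return base_confirming / (base_confirming + surviving_disconfirming)

stages = {"search": 0.7, "interpretation": 0.7, "memory": 0.7}
applied = []
for name, rate in stages.items():
    applied.append(rate)
    print(f"after {name:<15} confirming share = {confirming_share(applied):.2f}")
```

No single stage looks dramatic, yet a world that was evenly split ends up remembered as roughly three-quarters confirming, because each stage filters what the previous stages already let through.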

This is why confirmation bias is so difficult to detect in yourself. The process doesn't feel biased from the inside — it feels like careful consideration of the evidence. The evidence you've seen does support your conclusion. What you can't see is the evidence you didn't look for, misinterpreted when you found it, and forgot after it failed to confirm your belief.

Why It Exists: The Evolutionary Story

Confirmation bias is not a malfunction. It is a feature — one that served important functions in the environment where human cognition evolved, even if it produces systematic errors in the modern information environment.

Cognitive Efficiency

By one widely cited (and rough) estimate, the senses deliver about 11 million bits of information per second, of which only around 40 to 50 bits are processed consciously. Filtering is not optional; it is the fundamental operation of the cognitive system. Confirmation bias is one of the most efficient filters available: if you already have a working model of a domain, preferentially attending to information that fits that model is efficient in environments where the model is usually right.

In a stable environment where your beliefs were formed through genuine experience, confirmation bias keeps your attention on the most relevant information and allows fast action. The problem arises in novel, rapidly changing, or ideologically contested environments — exactly the environments modern humans inhabit — where prior beliefs are more likely to be wrong or incomplete.

The Social Function

Hugo Mercier and Dan Sperber's "argumentative theory of reasoning" proposes that human reasoning evolved not primarily to discover truth but to produce arguments that justify positions to others and evaluate others' arguments. On this account, confirmation bias is a feature of the reasoning system designed for social persuasion: when making a case to others, you look for confirming evidence because that's what persuades.

This theory explains why confirmation bias is stronger when people are emotionally or socially invested in their positions — it is most active exactly when the social function of reasoning is most engaged. It also explains why confirmation bias is somewhat weaker when people are evaluating others' reasoning rather than defending their own: the argumentative function calls for skeptical evaluation of incoming arguments while generating supporting arguments for your own position.

The Myside Bias Connection

Keith Stanovich's research on "myside bias" — a specific form of confirmation bias in reasoning — shows that it correlates very weakly with intelligence. People with higher IQs are not significantly less prone to myside bias than people with lower IQs. What intelligence does is make people better at constructing and defending arguments — which means smarter people may be more effective at confirmation bias, not less susceptible to it. The sophisticated reasoner is better at finding evidence that confirms their position, not better at finding evidence that challenges it.

The Real Costs of Confirmation Bias

Confirmation bias is so ubiquitous that it might seem like background noise — something everyone has, so it cancels out, and good reasoning emerges anyway. This is wrong. The costs are specific, measurable, and in many domains very large.

Bad Investment Decisions

Investors who fall in love with a thesis look for confirming evidence while discounting disconfirming signals. Research on professional analysts suggests that after initiating coverage with a buy recommendation, analysts are less likely to downgrade the stock when negative information emerges than they would be if they were evaluating the same information without a prior commitment. The prior commitment activates confirmation bias, and the bias produces persistently optimistic assessments in the face of deteriorating fundamentals.

Medical Misdiagnosis

Premature diagnostic closure — settling on a diagnosis early in the evaluation process and then interpreting subsequent information as confirming it — is one of the most common sources of medical error. Once a physician has formed an initial diagnosis, confirmation bias makes subsequent symptoms, test results, and patient statements more likely to be interpreted as consistent with that diagnosis, even when they're not. The "anchoring" on the initial hypothesis is a direct manifestation of biased interpretation operating on life-critical decisions.

Relationship Deterioration

In relationships under stress, confirmation bias accelerates the deterioration. Once a person has formed a negative impression of a partner, friend, or colleague, they are more likely to notice and remember behavior that confirms the negative impression and more likely to attribute ambiguous behavior to the negative interpretation. The relationship doesn't just have problems — the cognitive architecture has been set to find evidence of problems and discount evidence of goodness.

Political and Social Polarization

The information environment of social media is largely optimized for engagement, and engagement tends to reward confirmation: algorithmic feeds often surface content that matches your existing views, creating echo chambers that feel like they're showing you "reality" while actually showing you a curated subset of reality that confirms what you already believe. The result is not just individual misinformation but an erosion of shared factual reality across political and social groups, a systemic version of the individual-level mechanism.

Confirmation Bias in High-Stakes Domains

Investing and Finance

The investment domain is particularly vulnerable to confirmation bias because investment theses are typically formed before all evidence is available, emotional commitment to the thesis grows with the size of the position, and the social dynamics of fund management create incentives to maintain rather than revise positions. Value investors who understand this architecture deliberately seek out the bear case for every investment — not as a formality but as a genuine search for disconfirming evidence — because they know that without this discipline, their analysis will systematically underweight the evidence against their thesis.

This is precisely what inversion thinking provides as a systematic counterweight to confirmation bias in investment analysis: the deliberate construction of the strongest possible case against your thesis before you've committed to it.

Science and Research

Science has developed institutional mechanisms that, among other things, counteract confirmation bias: peer review, replication, pre-registration of hypotheses, and a growing push to publish null results. These mechanisms exist because individual scientists are subject to the same confirmation bias as everyone else: they believe their hypotheses, they look for confirming evidence, and they interpret ambiguous data in the direction of their prior beliefs.

The replication crisis in psychology and medicine — the finding that many published results could not be replicated by independent researchers — is substantially a manifestation of confirmation bias operating within a scientific system that had insufficient safeguards. Researchers found what they were looking for, because the methods available to them gave enough flexibility in data collection and analysis to produce confirming results even when the underlying effect wasn't real.
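The flexibility problem has a simple arithmetic core. If a researcher can run several analyses and report whichever one reaches significance, the chance of a false positive under a true null hypothesis grows with the number of analyses. The sketch below assumes the analyses are independent tests at α = 0.05; real analytic choices are usually correlated, so it illustrates the direction of the effect rather than any measured rate.

```python
import random

ALPHA = 0.05

def analytic_false_positive_rate(k, alpha=ALPHA):
    """Chance that at least one of k independent null tests is 'significant'."""
    return 1 - (1 - alpha) ** k

def simulated_false_positive_rate(k, trials=50_000, alpha=ALPHA, seed=0):
    """Monte Carlo check of the formula above.

    Under a true null hypothesis a valid test's p-value is uniform on [0, 1],
    so p-values are drawn directly instead of simulating raw data.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # The researcher "finds" an effect if any of the k analyses has p < alpha.
        if any(rng.random() < alpha for _ in range(k)):
            hits += 1
    return hits / trials

for k in (1, 3, 5, 10):
    print(f"{k:>2} analyses: analytic {analytic_false_positive_rate(k):.3f}, "
          f"simulated {simulated_false_positive_rate(k):.3f}")
```

With ten independent shots at significance, an effect that does not exist comes up "significant" about 40% of the time instead of 5%.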

Strategy and Business Decisions

Strategic planning processes are systematically distorted by confirmation bias because strategies are proposed by individuals who are invested in them. The evidence gathered in the planning process is more likely to be evidence that supports the strategy — competitive analyses that emphasize favorable market dynamics, customer research that confirms product-market fit, financial models with optimistic assumptions. The disconfirming evidence — the structural challenges, the competitive threats, the model assumptions most likely to be wrong — receives less attention precisely because the team generating the analysis is committed to the strategy it supports.

Why Smart People Are Not Immune

One of the most important and counterintuitive findings in the research on confirmation bias is that intelligence provides minimal protection against it — and in some domains actually amplifies it.

The mechanism: confirmation bias involves not just the tendency to seek confirming evidence but the ability to construct arguments justifying why disconfirming evidence should be discounted. Intelligent people are better at constructing these justifications. They find more sophisticated reasons to reject evidence they don't like, more creative alternative explanations for uncomfortable data, and more elaborate arguments for why their prior beliefs should be maintained despite apparently contradictory evidence. This phenomenon, smart people being better at motivated reasoning, has been reported repeatedly, particularly in research on politically contested questions.

The Intelligence Trap

Psychologist Jonathan Haidt describes moral reasoning as operating like a lawyer rather than a scientist: you start with the conclusion you want to reach, then construct arguments that support it. Intelligence makes you a better lawyer — better at constructing the supporting arguments — but it doesn't make you more likely to reach the correct conclusion if the conclusion is driven by motivated reasoning rather than evidence.

This is why expertise in a domain does not protect against confirmation bias within that domain, and may actually amplify it — experts have more sophisticated tools for interpreting evidence, which means they have more sophisticated tools for explaining away the evidence that doesn't fit their existing view.

The implication is direct: the antidote to confirmation bias is not more intelligence or more expertise. It is specific structural practices that make disconfirmation difficult to avoid — that force engagement with the disconfirming evidence rather than allowing it to be explained away. The practices work regardless of intelligence level because they change the structure of the inquiry, not the quality of the reasoning within it.

How to Counteract Confirmation Bias

The research on debiasing is clear on one point: simply knowing about confirmation bias does not reliably reduce it. Awareness helps somewhat, but the bias operates below the level of conscious intention — it shapes what you notice, how you interpret it, and what you remember, often before any deliberate reasoning occurs. Effective counterstrategies must therefore be structural, not merely attitudinal.

Active Disconfirmation

The most direct counterstrategy: deliberately seek evidence that would falsify your current belief. Not evidence that might be slightly inconsistent with it — evidence that, if found, would compel you to revise it. Ask explicitly: what would I need to see to conclude that I'm wrong about this? Then look for that evidence specifically, with the same effort and care you'd apply to finding confirming evidence.

This is the scientific norm of falsificationism applied to everyday reasoning. Popper's criterion for a scientific claim, that one can specify in advance what observation would falsify it, is also a good criterion for genuine open-mindedness. If you cannot specify what would change your mind, you're not forming a belief based on evidence; you're maintaining a commitment regardless of evidence.

Action Steps

- State your current belief precisely. Vague beliefs are impossible to falsify. "Company X is a good investment" is too vague. "Company X will generate 15%+ annual returns over the next five years because of Y mechanism" is specific enough to test.

- Specify what would falsify it. What evidence, if found, would compel you to conclude the belief is wrong? If you can't answer this, you don't have a belief — you have a conviction.

- Actively search for that evidence. Not as a formality, but as a genuine search. Spend at least as much time looking for disconfirming evidence as confirming evidence.

- Apply equal scrutiny standards. When you find disconfirming evidence, resist the impulse to immediately look for methodological flaws. Apply the same level of critical scrutiny to confirming and disconfirming evidence.

- Update proportionally. When disconfirming evidence is found and survives scrutiny, update your belief proportionally to the strength of the evidence. Not wholesale revision — Bayesian updating, proportional to the evidence weight.
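Proportional updating has a compact form in Bayes' rule written with odds: the evidence multiplies your prior odds by a likelihood ratio, which measures how much more probable the evidence is if the belief is true than if it is false. The numbers in the worked example are illustrative.

```latex
\underbrace{\frac{P(H \mid E)}{P(\neg H \mid E)}}_{\text{posterior odds}}
= \underbrace{\frac{P(H)}{P(\neg H)}}_{\text{prior odds}}
\times \underbrace{\frac{P(E \mid H)}{P(E \mid \neg H)}}_{\text{likelihood ratio}}
% Worked example: P(H) = 0.8, so prior odds = 0.8 / 0.2 = 4.
% Disconfirming evidence E is three times as likely if H is false: likelihood ratio = 1/3.
\frac{P(H \mid E)}{P(\neg H \mid E)} = 4 \times \tfrac{1}{3} = \tfrac{4}{3}
\quad\Longrightarrow\quad
P(H \mid E) = \frac{4/3}{1 + 4/3} = \frac{4}{7} \approx 0.57
```

The belief drops from 0.8 to about 0.57: a real revision, but not an abandonment, which is what updating in proportion to the evidence means.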

The Pre-Mortem as Debiasing Tool

Gary Klein's pre-mortem technique — imagining that a decision has already failed and asking why — is specifically effective against confirmation bias because it frames the exercise as explanation rather than evaluation. When asked to evaluate a plan, the mind is in advocate mode and confirmation bias is at full strength. When asked to explain why a plan failed (in imagination), the mind shifts to diagnostic mode, which activates different cognitive processes and surfaces disconfirming considerations that the advocate mode would have suppressed.

Seek Out Genuine Disagreement

The most reliable source of disconfirming evidence is people who genuinely disagree with you and can articulate why. Not devil's advocates, who play the role of dissenter as an exercise, but people who have examined the same evidence and reached a different conclusion. Their disagreement is the most valuable input for calibrating your own beliefs.

This requires seeking out genuine disagreement rather than the strawman version of disagreement that the confirming mind naturally constructs. A genuine disagreer who knows their subject is one of the most valuable epistemic resources available, and the discomfort of engaging with them is often a sign that they're challenging something you'd prefer not to question. That discomfort is a signal worth following, not avoiding. The circle of competence framework applies here: genuine experts in an adjacent domain often see disconfirming angles that insiders miss precisely because they're not inside the confirming ecosystem.

Confirmation Bias in Organizations

Individual-level confirmation bias aggregates into organizational-level epistemic failure when the organizational culture, incentive structure, or decision process amplifies rather than counteracts the individual biases of its members.

HiPPO: The Highest Paid Person's Opinion

In hierarchical organizations, there is a documented tendency for information to be filtered upward in ways that confirm the senior person's existing views. People who work for a strong-opinioned leader learn quickly which conclusions are welcome and which are not. The information that reaches the decision-maker is systematically biased toward what confirms their current beliefs — not through deliberate dishonesty but through the accumulated effect of hundreds of small choices about what to emphasize, what to omit, and how to frame.

The result is that senior leaders in hierarchical organizations are often operating on more confirmation-biased information than their direct reports — because the filtering is upward, not downward. The most important decisions are made by the people with the most distorted information flow. This is why the best organizational leaders actively work to counteract this dynamic — creating channels for dissent, rewarding the delivery of bad news, and being visibly curious about evidence that contradicts their current view.

Groupthink as Collective Confirmation Bias

Irving Janis's research on groupthink — the phenomenon where cohesive groups make poor decisions through suppression of dissent and illusion of unanimity — is substantially a description of confirmation bias operating at the collective level. The group, like the individual, seeks confirming information, interprets ambiguous information in the direction of existing group beliefs, and suppresses or marginalizes members who introduce disconfirming views.

The structural antidotes — designated devil's advocates, separate evaluation teams, leader abstention from early position-taking — are all mechanisms to force disconfirming inputs into the group process before the social dynamics of confirmation have closed the group off to them.

Building Disconfirmation as a Practice

Counteracting confirmation bias is not a one-time intervention — it requires building structural practices that make disconfirmation a regular part of how you engage with evidence and update beliefs. The goal is not to eliminate all prior beliefs (which is impossible and would produce paralysis) but to maintain genuine openness to revision when disconfirming evidence meets a reasonable evidential threshold.

The Belief Inventory

Periodically review your most important beliefs — about your industry, your strategy, your relationships, your own capabilities — and ask for each: when did I last seriously engage with evidence that challenges this belief? If the answer is "never" or "not recently," the belief is a candidate for the disconfirmation protocol. Not because it's wrong — it may be entirely correct — but because an unchallenged belief is an unexamined one.
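A belief inventory can be as simple as a list with a "last seriously challenged" date. The sketch below is one hypothetical way to flag candidates for the disconfirmation protocol; the `Belief` class, the example entries, and the 90-day threshold are all invented for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class Belief:
    statement: str
    last_challenged: Optional[date]  # None means never seriously challenged

def due_for_disconfirmation(beliefs, today, max_age_days=90):
    """Return beliefs never challenged, or not challenged within max_age_days.

    The 90-day default is an arbitrary, illustrative threshold.
    """
    cutoff = today - timedelta(days=max_age_days)
    return [b for b in beliefs
            if b.last_challenged is None or b.last_challenged < cutoff]

# Hypothetical inventory entries.
inventory = [
    Belief("Our main competitor cannot match our pricing", None),
    Belief("Customers churn mainly because of onboarding", date(2024, 1, 10)),
    Belief("The new hire is ready to lead the project", date(2024, 5, 2)),
]

for b in due_for_disconfirmation(inventory, today=date(2024, 6, 1)):
    print("review:", b.statement)
```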

The Asymmetric Scrutiny Check

When evaluating evidence, ask explicitly: am I applying the same level of skepticism to this as I would to evidence pointing in the opposite direction? If a study confirming your investment thesis would be accepted, would you accept a study of equal quality that challenged it? If not, you're applying asymmetric scrutiny — which is confirmation bias in action.

Tracking Prediction Accuracy

One of the most effective long-term antidotes to confirmation bias is maintaining a record of your predictions and their outcomes. Confirmation bias survives partly because memory is biased — you remember your correct predictions more vividly than your incorrect ones, which maintains an inflated sense of your accuracy. A written prediction record, tracked honestly, reveals your actual calibration and surfaces the domains where confirmation bias is producing the largest errors. This is the same calibration practice recommended by Philip Tetlock's superforecaster research — and it works precisely because it bypasses the biased memory that allows confirmation bias to persist uncorrected.
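A prediction record needs only two things per entry: the probability you stated and whether the event happened. The sketch below scores such a log with the Brier score (the mean squared gap between stated probability and outcome, the accuracy measure used in Tetlock's forecasting tournaments) and groups forecasts into 10% buckets to compare stated confidence with observed hit rates. The log entries and function names are invented for illustration.

```python
from collections import defaultdict

def brier_score(forecasts):
    """Mean squared error between stated probabilities and outcomes (1 or 0).

    0.0 is perfect; always answering 0.5 on binary questions scores 0.25.
    """
    return sum((p - outcome) ** 2 for p, outcome in forecasts) / len(forecasts)

def calibration_table(forecasts, bucket_width=0.1):
    """Group forecasts by stated probability and report (count, hit rate)."""
    buckets = defaultdict(list)
    for p, outcome in forecasts:
        # Snap each probability to the nearest bucket, e.g. 0.9 -> 0.9.
        key = round(round(p / bucket_width) * bucket_width, 2)
        buckets[key].append(outcome)
    return {k: (len(v), sum(v) / len(v)) for k, v in sorted(buckets.items())}

# Hypothetical prediction log: (stated probability, did it happen? 1/0)
log = [(0.9, 1), (0.9, 1), (0.9, 0), (0.9, 0),
       (0.7, 1), (0.7, 0), (0.6, 1), (0.3, 0), (0.2, 0), (0.2, 1)]

print(f"Brier score: {brier_score(log):.3f}")
for stated, (n, hit_rate) in calibration_table(log).items():
    print(f"said {stated:.0%}: n={n}, happened {hit_rate:.0%}")
```

In this made-up log, the events called at 90% happened only half the time, exactly the kind of overconfidence that biased memory would otherwise hide.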

The Epistemic Commitment

Counteracting confirmation bias ultimately requires an epistemic commitment that is uncomfortable: the genuine willingness to be wrong. Not the performed willingness to be wrong — the real thing. The acknowledgment that your current beliefs, however carefully formed, are hypotheses rather than conclusions, subject to revision when evidence warrants it.

This commitment is rarer than it sounds. Most people say they're open to being wrong while behaving as if being wrong is a threat to be defended against rather than information to be incorporated. The difference is visible in behavior: the genuinely open person seeks disconfirming evidence with the same energy as confirming evidence, updates visibly when it's found, and treats the revision of a prior belief as progress rather than defeat.

Building this orientation, and combining it with the inversion framework for structured disconfirmation, second-order thinking for tracing where confirmation bias leads downstream, and the first-principles approach for stripping away accumulated assumptions, produces reasoning that updates correctly when the world changes instead of persisting in comfortable but increasingly wrong beliefs.